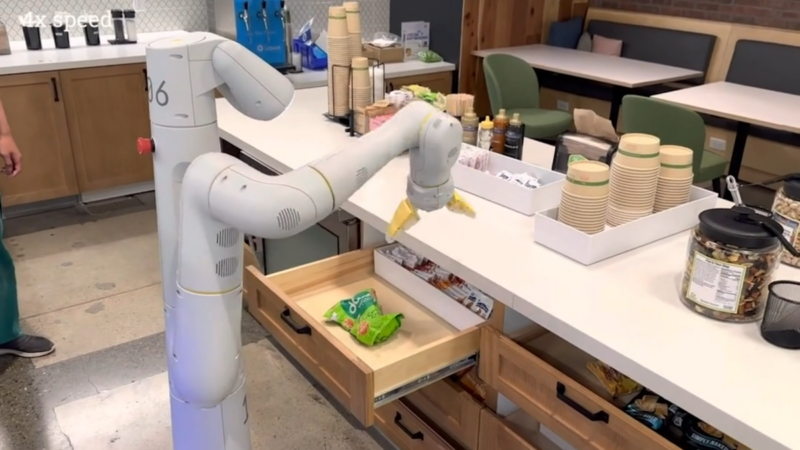

A robotic arm controlled by PaLM-E reaches for a bag of chips in a demonstration video. (credit: Google Research)

On Monday, a group of AI researchers from Google and the Technical University of Berlin unveiled PaLM-E, a multimodal embodied visual-language model (VLM) with 562 billion parameters that integrates vision and language for robotic control. They claim it is the largest VLM ever developed and that it can perform a variety of tasks without retraining.

According to Google, when given a high-level command, such as "bring me the rice chips from the drawer," PaLM-E can generate a plan of action for a mobile robot platform with an arm (developed by Google Robotics) and execute the actions by itself.

PaLM-E does this by analyzing data from the robot's camera without needing a pre-processed scene representation. As a result, no human has to prepare or annotate the data beforehand, which allows for more autonomous robotic control.


https://ift.tt/umQMvNp